Cloverleaf’s new grocery shelf displays watch shoppers, track their emotions

Retail tech firm launches first shelf-based dynamic display that tracks and analyzes faces for their emotional states and demographics.

You walk into a grocery store to buy barbeque-flavored potato chips. But you’re disappointed to find that the overflowing shelf of chips — although harboring more than a dozen different types — doesn’t have that flavor.

Within seconds, the LCD digital sign along the shelf edge shows a message: “Don’t worry – BBQ chips will be restocked tomorrow!”

That vision of responsive groceries got closer to reality this week, when retail tech company Cloverleaf announced what it describes as the first digital shelf display with built-in detection of customers’ emotional states and demographics. The system will be presented for the first time at the big National Retail Federation show, starting this weekend in New York City.

The San Diego-based firm, which has focused for 10 years on digital signage for sports stadiums and other venues, had been testing an LED version of its new shelfPoint solution. In the latter part of this year, shelfPoint will be released with displays that instead use LCD because of better resolution and lower cost. This is Cloverleaf’s first shelf-based product.

The dynamic digital displays are placed on the edge of several shelves in a grocery store rack, fed by a 4G-connected media player in the same shelf rack. No special kinds of shelves are needed.

From 10 feet away, Cloverleaf founder and CEO Gordon Davidson told me, a shopper might see animated Coca-Cola bubbles. When five feet away, there might be moving product shots of Coke, and, under five feet, the shopper might see product specifics. The intent is to provide what the company describes as “miniaturized visual marketing campaigns” to attract the shopper.
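The distance tiers Davidson describes could be sketched roughly as follows. This is only an illustration: the function name, the content labels and the exact cutoffs are assumptions, since the company gave only approximate distances and did not say how shelfPoint decides what to show.

```python
# Hypothetical distance bands, in feet. The article gives only rough
# figures (about 10 feet, five feet, under five feet), so these cutoffs
# are assumptions, not Cloverleaf's actual settings.
NEAR_FT = 5.0
FAR_FT = 10.0


def content_for_distance(distance_ft: float) -> str:
    """Return an illustrative content label for a shopper at a given distance."""
    if distance_ft < 0:
        raise ValueError("distance must be non-negative")
    if distance_ft < NEAR_FT:
        return "product_specifics"   # e.g. details about the product
    if distance_ft < FAR_FT:
        return "product_shots"       # e.g. moving product shots of Coke
    return "brand_animation"         # e.g. animated Coca-Cola bubbles


if __name__ == "__main__":
    for d in (12.0, 5.0, 3.0):
        print(d, content_for_distance(d))
```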

While other firms offer dynamic displays in store, shelfPoint adds the capability to know something about the person looking at the shelf.

The display along the edge of the top shelf also contains an optical sensor, which visually captures images of the customers standing within five feet and looking at the display. If there are several customers, it picks the one closest to the shelf to characterize the group.
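The selection rule described here (the nearest shopper within five feet who is looking at the display) might look something like the sketch below. The `DetectedFace` type and its fields are hypothetical stand-ins, not part of any Cloverleaf or Affectiva interface.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

# The article says the sensor considers shoppers standing within five feet.
MAX_RANGE_FT = 5.0


@dataclass(frozen=True)
class DetectedFace:
    """Hypothetical record for one face seen by the shelf sensor."""
    distance_ft: float
    looking_at_display: bool


def pick_representative(faces: Sequence[DetectedFace]) -> Optional[DetectedFace]:
    """Pick the closest in-range shopper who is looking at the display."""
    candidates = [
        f for f in faces
        if f.looking_at_display and f.distance_ft <= MAX_RANGE_FT
    ]
    # min() with default=None returns None when nobody qualifies.
    return min(candidates, key=lambda f: f.distance_ft, default=None)
```

In this sketch, a shopper two feet away who is looking elsewhere would be skipped in favor of one four feet away who is facing the shelf. Whether the real system orders those two checks the same way is not something the company has said.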

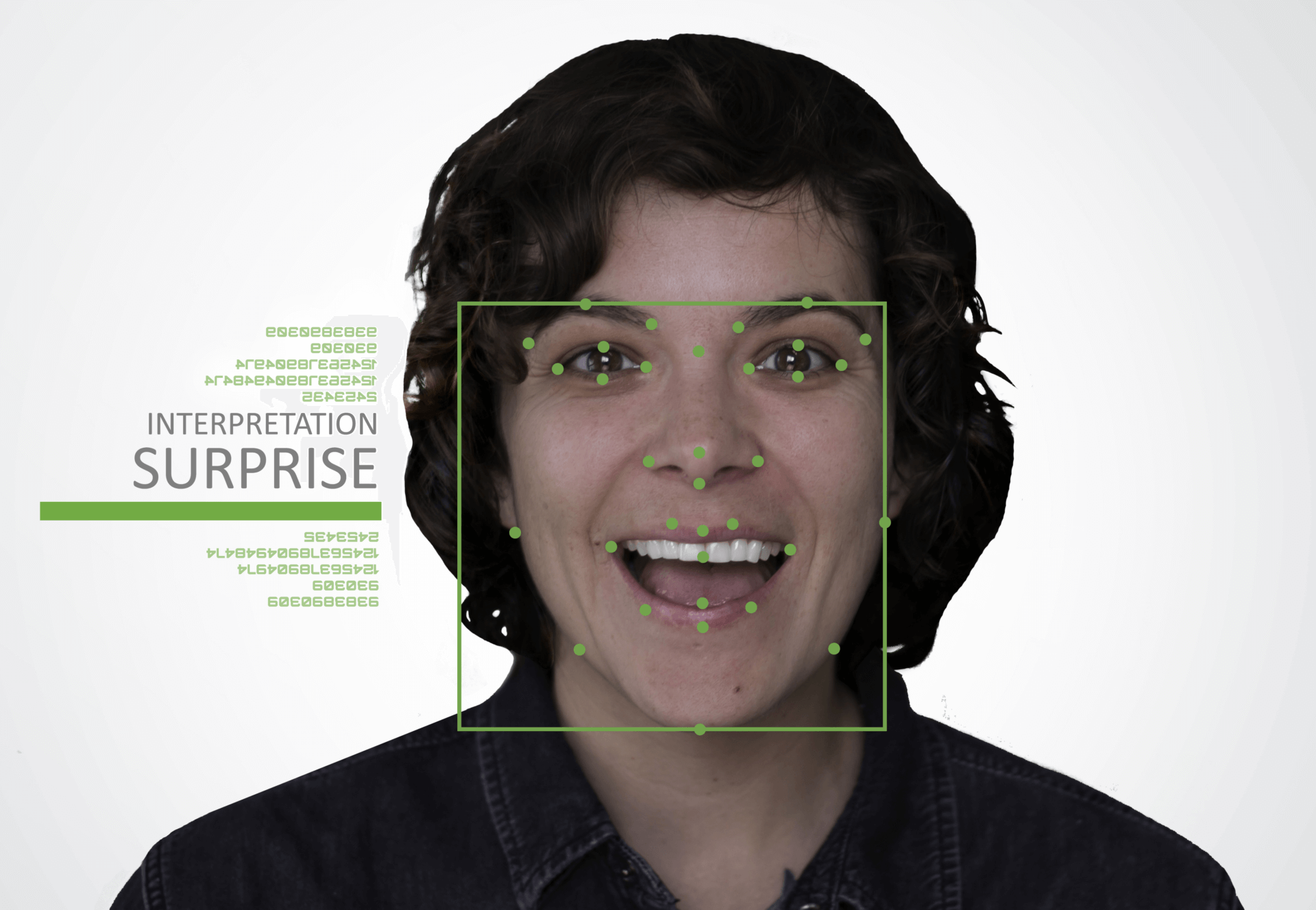

The sensor assesses expression points on the face, such as the edges of the mouth, the eyes and the eyebrows. That data (not the actual image of the person, but a schematic rendition) is then analyzed in Cloverleaf’s cloud by software from facial recognition firm Affectiva.

Shoppers’ expressions are characterized into such categories as “joy,” “sadness,” “anger,” “fear” or “surprise.” Davidson told me that the emotional assessments can help physical stores determine if, say, the product selection, display or pricing pleases the shopper.

There is, however, no way to determine which of those or other factors led you to suddenly laugh out loud while you’re looking for BBQ potato chips, or to determine if your emotional state is left over from a fight you had this morning with your significant other.

In addition, Affectiva assesses the shopper’s age, gender and major ethnic group.

Davidson assured me that no shopper is identified and actual facial images are not stored. Only the points on the face and the resulting aggregate analysis are kept. A report might show, for example, that 2,000 shoppers stopped in front of an end cap over the weekend, but only 1,300 showed any response indicating engagement.
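To put those example figures in perspective, 1,300 engaged shoppers out of 2,000 works out to a 65 percent engagement rate. A minimal sketch of that arithmetic, with a hypothetical function name, is:

```python
def engagement_rate(stops: int, engaged: int) -> float:
    """Share of shoppers who stopped and also showed an engaged response."""
    if stops <= 0:
        raise ValueError("stops must be positive")
    if not 0 <= engaged <= stops:
        raise ValueError("engaged must be between 0 and stops")
    return engaged / stops


if __name__ == "__main__":
    # Figures from Davidson's example: 2,000 stops, 1,300 engaged.
    rate = engagement_rate(2000, 1300)
    print(f"{rate:.0%}")  # prints "65%"
```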

Ethnic groupings, he said, are white, black, Hispanic, northern Asian or southern Asian. This breakdown, he said, is intended to help store owners determine if any ethnic-targeted marketing — such as ads on TV shows popular with Hispanics — has been successful.

The basic idea, Davidson said, is to give brick-and-mortar retailers some of the understanding about their customers that online retailers commonly have.

While the current shelfPoint solution does not change the display automatically because of the emotional state of the nearby shopper (such as in my example above), Davidson said that kind of feedback could be offered in the future. The display’s moving imagery is updated remotely. Also in the works: A/B testing.

Davidson said a 10-week pilot test with a major, unnamed grocer in the northeastern US saw an average 37 percent sales lift. But, he acknowledged, this was because of the digital display, not the feedback about, say, all the people who were saddened by not finding BBQ chips.

No data is yet available on whether emotional or demographic analytics make a difference to sales.

Contributing authors are invited to create content for MarTech and are chosen for their expertise and contribution to the martech community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. MarTech is owned by Semrush. Contributor was not asked to make any direct or indirect mentions of Semrush. The opinions they express are their own.
