PullString drops text-based chat for voice conversations in its new platform

The San Francisco-based company says Converse is the first end-to-end platform for non-tech users to create voice-based Skills for Amazon’s Alexa.


The base of Amazon Echo, the smart speaker home of voice agent Alexa

In September of last year, PullString announced its Author platform for developing text-based chatbots.

A few months after the launch, the firm added the ability to create Skills — that is, conversational third-party applications — for Amazon’s Alexa voice agent.

This week, PullString is launching a new platform called Converse that is solely focused on letting non-technical users create voice-based Skills.

The company says this is the first end-to-end platform for non-technical users to create conversational interactions for Alexa. Converse is also PullString’s first self-service, cloud-based platform, as Author — which has now been discontinued — was desktop-based and required some professional services.

It’s end-to-end, CEO Oren Jacob told me, because it supports design, prototyping, testing and publishing to Amazon. The Author platform, by contrast, required the author to export the finished work and then deploy it separately.

Now, deployment is a button push. Jacob said that competitor Sayspring cannot automatically deploy a finished conversation in full, as PullString can.

Converse requires no coding, and components to support developer-authored customization will be added next year. Currently, there’s an all-purpose API for connecting to back-ends like CRMs, campaign management or inventory management, but full integration with popular marketing and management platforms is on the roadmap.

Support for Google Assistant and for voice-based applications in IoT devices like dolls or appliances is also in the works. Jacob said his company decided to focus on voice interaction because, while text interaction isn’t going away, “voice is the future of how humans will connect with computers.”

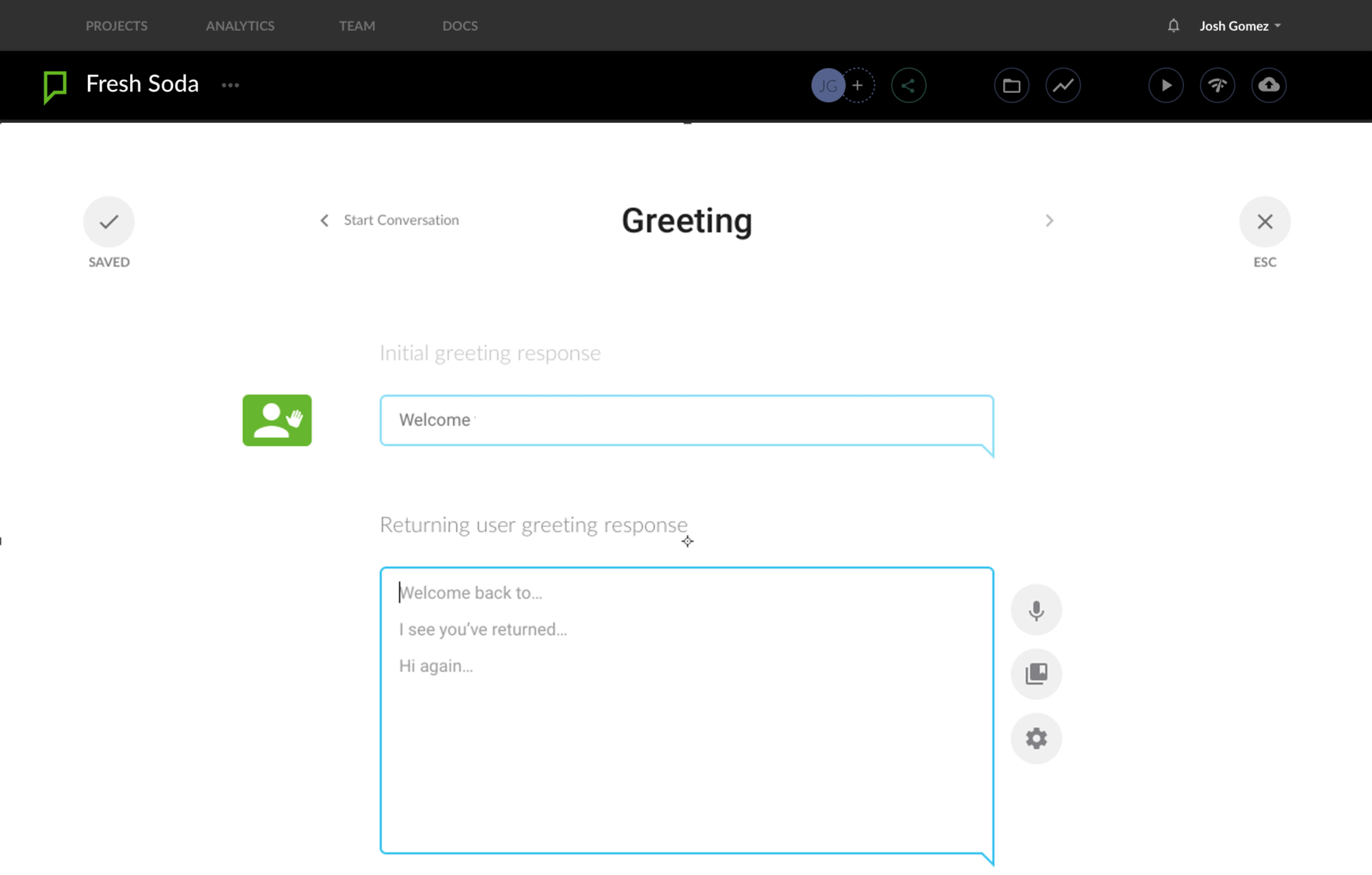

In Converse, a user sets up Conversational Blocks that contain the relevant dialog options for a given part of a conversational flow, like Greeting, Branding, Closing or Q&A. The user can then arrange the Blocks in the desired order.

The platform uses Amazon’s intent engine to listen for certain words that trigger a response, and the user can set up a “don’t understand” fallback sequence for when things get too confusing.

Sound clips like a music excerpt can be inserted between lines of dialog, the Amazon-generated voice can be modified through Amazon’s tools, and the platform can estimate how likely it is that a given conversation is with a returning user.

Contributing authors are invited to create content for MarTech and are chosen for their expertise and contribution to the martech community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. MarTech is owned by Semrush. Contributor was not asked to make any direct or indirect mentions of Semrush. The opinions they express are their own.
